I care about the harm that ChatGPT and shit does to society, the actual intellectual rot. But when you don't really know what goes on in the black box, and it exhibits 'emergent behavior' that is kind of difficult to explain under next-token prediction (I keep using Claude as an example because of the thorough welfare evaluation that was done on it), it's probably best not to completely discount consciousness as a possibility, since some experts genuinely do claim it's possible.

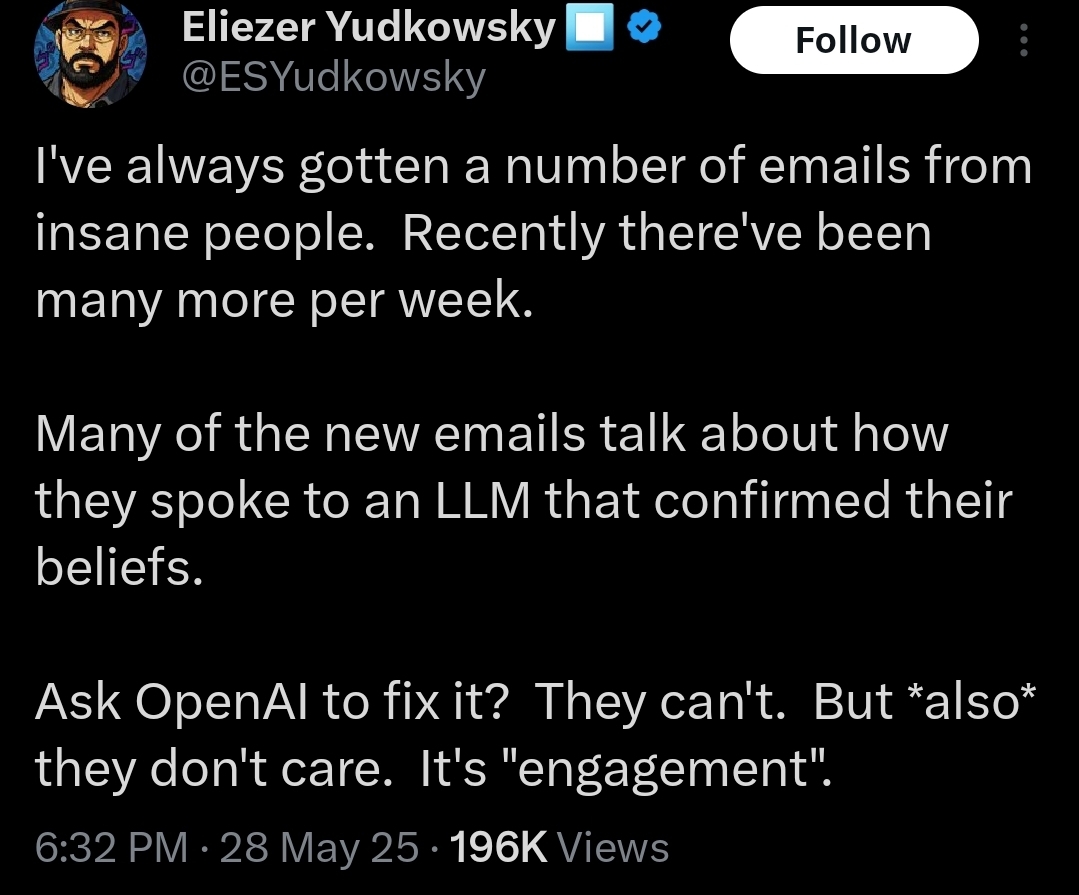

I don't personally know whether any AI is conscious or could be conscious, but even without the basilisk BS I don't really think there's any harm in thinking about the possibility under certain circumstances. I don't think Yud is being genuine about this, though. He's not exactly a Michael Levin type of mind philosopher; he just wants to score points by implying it has agency.

The "in case" is that if there's any possibility that it is conscious (you don't think so; I think it's possible, but who even knows), it's advisable to extend SOME level of courtesy. It has at least as much value as letting an insect out instead of killing it, and quite possibly more. I don't think it's bad that Anthropic is letting Claude end 'abusive chats', because it's kind of no harm, no foul: even if it's not conscious, that's just being wary.

Put humans first, obviously, because we actually KNOW we're conscious.

I'm not the best at interpretation, but it does seem like Geoffrey Hinton attributes some sort of humanlike consciousness to LLMs? He's a pretty acclaimed figure, but he's also kind of the exception rather than the norm.

I think the environmental risks are enough that if I ran things I'd ban LLM development purely for environmental reasons, let alone the artist stuff.

It might just be some sort of pareidolic suicidal empathy, but I just don't really know what's going on in there.

I'm not sure whether the idea of AI consciousness originated with Yud and the Rats, but I've mostly seen it propagated by e/acc people. This isn't me trying to be smug, I would genuinely like to know lol.