I feel like we need to talk about Lemmy's massive tankie censorship problem. A lot of popular Lemmy communities are hosted on lemmy.ml. It's been well known for a while that the admins/mods of that instance have, let's say, rather extremist and one-sided political views. In short, they're what's colloquially referred to as tankies. This wouldn't be much of an issue if they didn't regularly abuse their admin/mod status to censor and silence people who dissent from their political beliefs and, for example, post things critical of China, Russia, the USSR, socialism, ...

As an example, there was a thread today about the anniversary of the Tiananmen Massacre. When I was reading it, the thread consisted mostly of posts critical of China, plus some whataboutist/denialist replies critical of the USA and the West. In terms of votes, the posts critical of China were definitely getting the most support.

I posted a comment in this thread linking to "https://archive.ph/2020.07.12-074312/https://imgur.com/a/AIIbbPs" (WARNING: graphic content), which describes aspects of the atrocities that aren't widely known even in the West, along with supporting evidence. My comment was promptly removed for violating the "Be nice and civil" rule. When I looked back at the thread, I noticed that all posts critical of China had been removed, while the whataboutist and denialist comments were left in place.

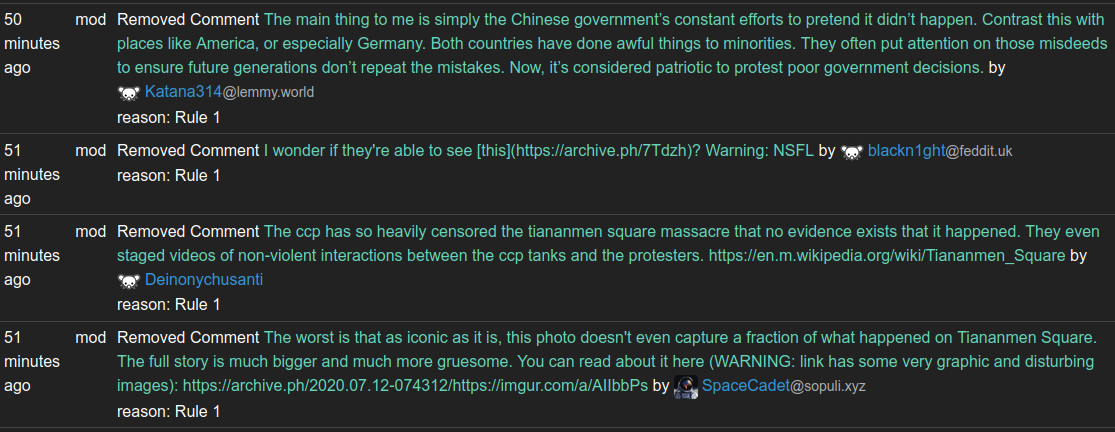

This is what the modlog of the instance looks like:

Definitely a trend there, wouldn't you say?

When I called them out on their one-sided censorship, with a screenshot of the modlog above, I promptly received a ban from every community on lemmy.ml that I had ever participated in.

Proof:

Now, many of you will probably think something like: "So what, it's the fediverse, you can use another instance."

The problem with this reasoning is that many of the popular communities are actually on lemmy.ml, and they're not so easy to replace. I mean, in terms of content and engagement, Lemmy is already a pretty small place as it is. So it's rather pointless sitting, for example, in /c/linux@some.random.other.instance.world where there's nobody to discuss anything with.

I'm not sure if there's a solution here, but I'd like to urge people to avoid lemmy.ml hosted communities in favor of communities on more reasonable instances.

Write speeds on SMR drives start to stagnate after mere gigabytes written, not after terabytes. As soon as the CMR cache is full, you're fucked: throughput drops to utterly unusable speeds while the drive desperately tries to balance writing out blocks to the persistent area of the disk against accepting new incoming writes. I have 25-year-old consumer-level IDE drives that perform better than an SMR drive in this thrashing state.

Also, I often use hard drives as a temporary holding area for stuff that I'm transferring around for one reason or another, and it absolutely sucks when an operation that normally takes an hour or two suddenly becomes a multi-day endeavour tying up my computing resources. I was burned once when Seagate submarined SMR drives into the Barracuda line, and I got a drive that was absolutely unfit for purpose. Never again.
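
For a rough sense of how an hour-or-two transfer can turn into a multi-day one once the cache is full, here's a back-of-the-envelope sketch in Python. It's a toy model, not a benchmark: the function name `transfer_hours` and every number in it (1 TB transfer, 30 GB cache, 180 MB/s while the cache has room, 5 MB/s once it's full) are made-up assumptions for illustration, not measurements of any particular drive. It also ignores the drive flushing its cache in the background, so real behaviour will differ.

```python
def transfer_hours(total_gb, cache_gb, fast_mb_s, slow_mb_s):
    """Estimate hours needed to write total_gb to a drive (toy model).

    Assumption: the first cache_gb are written at fast_mb_s, and everything
    after that at slow_mb_s. Background cache flushing is ignored.
    """
    fast_gb = min(total_gb, cache_gb)   # portion absorbed by the fast cache
    slow_gb = total_gb - fast_gb        # portion written in the thrashing state
    seconds = (fast_gb * 1000) / fast_mb_s + (slow_gb * 1000) / slow_mb_s
    return seconds / 3600


# Hypothetical figures, chosen only to illustrate the shape of the problem.
TOTAL_GB = 1000

# Drive that sustains its speed for the whole transfer (no slow phase).
sustained = transfer_hours(TOTAL_GB, cache_gb=TOTAL_GB, fast_mb_s=180, slow_mb_s=180)

# Drive whose speed collapses after a 30 GB cache fills up.
collapsing = transfer_hours(TOTAL_GB, cache_gb=30, fast_mb_s=180, slow_mb_s=5)

print(f"sustained:  {sustained:.1f} h")
print(f"collapsing: {collapsing:.1f} h")
```

With these assumed numbers, the cache covers only a sliver of the transfer, so nearly all of the total time is spent in the slow phase. Plug in your own figures if you've measured a specific drive.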