No idea where they would land on what to mock and what to take seriously from this whole mess.

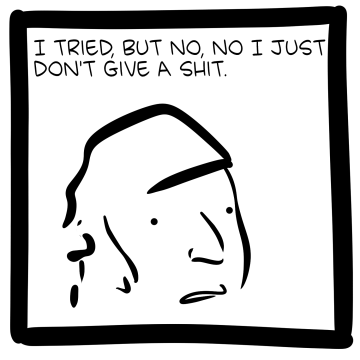

Don't know what they're up to these days, but last time I checked I had them pegged as enlightened centrists whose style of satire is mostly "having strong beliefs about stuff is cringe," rather than ever saying anything of even accidental substance about said stuff.

Reminds me of an SMBC comic with a setup along the same lines: if male birth order correlates with homosexuality, then with family size trends being what they are (families used to be a lot bigger), the past must have been considerably gayer on average.