El Reg: At last, a use case for AI agents with sky-high ROI: Stealing crypto

Two tastes that go great together!

It's possible we may be catching sight of the first shy movements towards a pivot to robotics:

https://techcrunch.com/2025/07/09/hugging-face-opens-up-orders-for-its-reachy-mini-desktop-robots/

Both are developer kits, because it's always a "maybe the clients will figure something out" type of business model these days.

trying to explain why a philosophy background is especially useful for computer scientists now, so i googled "physiognomy ai" and now i hate myself

Discover Yourself with Physiognomy.ai

Explore personal insights and self-awareness through the art of face reading, powered by cutting-edge AI technology.

At Physiognomy.ai, we bring together the ancient wisdom of face reading with the power of artificial intelligence to offer personalized insights into your character, strengths, and areas for growth. Our mission is to help you explore the deeper aspects of yourself through a modern lens, combining tradition with cutting-edge technology.

Whether you're seeking personal reflection, self-awareness, or simply curious about the art of physiognomy, our AI-driven analysis provides a unique, objective perspective that helps you better understand your personality and life journey.

Prices range from 18 to 168 USD (why not 19 to 199? Number magic?). But then you get an integrated approach of both Western and Chinese physiognomy. Two for one!

Thanks, I hate it!

Number magic?

they use numerology.ai as a backend

"we encode shit as numbers in an arbitrary way and then copy-paste it into chatgpt"

The web is often Dead Dove in a Bag as a Service innit?

do not eat

trying to explain why a philosophy background is especially useful for computer scientists now, so i googled “physiognomy ai” and now i hate myself

Well, I guess there's your answer - "philosophy teaches you how to avoid falling for hucksters"

Today's bullshit that annoys me: Wikiwand. From what I can tell their grift is that it's just a shitty UI wrapper for Wikipedia that sells your data to who the fuck knows to make money for some Israeli shop. Also they SEO the fuck out of their stupid site so that every time I search for something that has a Finnish wikipedia page, the search results also contain a pointless shittier duplicate result from wikiwand dot com. Has anyone done a deeper investigation into what their deal is or at least some kind of rant I could indulge in for catharsis?

I've seen conspiracy theories that a lot of the ad buys for stuff like this are a new avenue of money laundering, focusing on stuff like pirate sports streaming sites, sketchy torrent sites, etc. But a full scraped, SEOd Wikipedia clone also fits.

A company that makes learning material to help people learn to code made a test of programming basics for devs to find out if their basic skills have atrophied after use of AI. They posted it on HN: https://news.ycombinator.com/item?id=44507369

Not a lot of engagement yet, but so far there is one comment about the actual test content, one shitposty joke, and six comments whining about how the concept of the test itself is totally invalid how dare you.

It seems that the test itself is generated by autoplag? At least that's how I understand the PS and one of the comments about "vibe regression" in response to an error

Anyway, they say it covers Node and to any question regarding Node the answer is "no", I don't need an AI to know webdev fundamentals

Looks like it's been downranked into hell for being too mean to the AI guys, which is weird when it's literally an AI guy promoting his AI-generated trash.

In the morning: we are thrilled to announce this new opportunity for AI in the classroom

Someone finally flipped a switch. As of a few minutes ago, Grok is now posting far less often on Hitler, and condemning the Nazis when it does, while claiming that the screenshots people show it of what it's been saying all afternoon are fakes.

*musk voice* if machine god didn't want me to fuck with the racism dial, he wouldn't make it

Someone finally flipped a switch. As of a few minutes ago, Grok is now posting far less often on Hitler, and condemning the Nazis when it does, while claiming that the screenshots people show it of what it’s been saying all afternoon are fakes.

LLMs are automatic gaslighting machines, so this makes sense

A Supabase employee pleads with his software to not leak its SQL database like a parent pleads with a cranky toddler in a toy store.

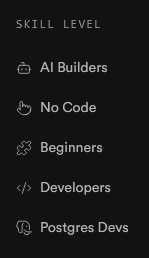

The Supabase homepage implies AI bros are two levels below "beginner", which I found somewhat amusing:

Skill Level

It's also completely accurate: AI bros are not only utterly lacking in any sort of skill, but actively refuse to develop their skills in favour of using the planet-killing plagiarism-fueled gaslighting engine that is AI, and actively look down on anyone who is more skilled than them or willing to develop their skills.

oof! That's hilarious!

Another day, another jailbreak method - a new method called InfoFlood has just been revealed, which involves taking a regular prompt and making it thesaurus-exhaustingly verbose.

In simpler terms, it jailbreaks LLMs by speaking in Business Bro.

I mean, decontextualizing and obscuring the meanings of statements in order to permit conduct that would in ordinary circumstances breach basic ethical principles is arguably the primary purpose of deploying the specific forms and features that comprise "Business English" - if anything, the fact that LLM models are similarly prone to ignore their "conscience" and follow orders when deciding and understanding them requires enough mental resources to exhaust them is an argument in favor of the anthropomorphic view.

Or:

Shit, isn't the whole point of Business Bro language to make evil shit sound less evil?

maybe there's just enough text written in that psychopathic techbro style, with similar disregard for normal ethics, that llms latched onto that. this is like what i guess happened with that "explain step by step" trick - instead of grafting from pairs of questions and answers like on quora, lying box grafts from sets of question -> steps -> answer like on chegg or stack or somewhere else where you can expect answers will be more correct

it'd be more of a case of getting awful output from awful input

Penny Arcade chimes in on corporate AI mandates:

This is so Charlie Stross coded that I tried to read the Mastodon comments.

Lmao I love this Lemmy instance

In recent days there's been a bunch of posts on LW about how consuming honey is bad because it makes bees sad, and LWers getting all hot and bothered about it. I don't have a stinger in this fight, not least because investigations proved that basically all honey exported from outside the EU is actually just flavored sugar syrup, but I found this complaint kinda funny:

The argument deployed by individuals such as Bentham's Bulldog boils down to: "Yes, the welfare of a single bee is worth 7-15% as much as that of a human. Oh, you wish to disagree with me? You must first read this 4500-word blogpost, and possibly one or two 3000-word follow-up blogposts".

"Of course such underhanded tactics are not present here, in the august forum promoting 10,000 word posts called Sequences!"

Lesswrong is a Denial of Service attack on a very particular kind of guy

I thought you were talking about lemmy.world (which also uses the LW acronym) for a second.

You must first read this 4500-word blogpost, and possibly one or two 3000-word follow-up blogposts”.

This, coming from LW, just has to be satire. There's no way to be this self-unaware and still remember to eat regularly.

NYT covers the Zizians

Original link: https://www.nytimes.com/2025/07/06/business/ziz-lasota-zizians-rationalists.html

Archive link: https://archive.is/9ZI2c

Choice quotes:

Big Yud is shocked and surprised that craziness is happening in this casino:

Eliezer Yudkowsky, a writer whose warnings about A.I. are canonical to the movement, called the story of the Zizians “sad.”

“A lot of the early Rationalists thought it was important to tolerate weird people, a lot of weird people encountered that tolerance and decided they’d found their new home,” he wrote in a message to me, “and some of those weird people turned out to be genuinely crazy and in a contagious way among the susceptible.”

Good news everyone, it's popular to discuss the Basilisk and not at all a profoundly weird incident which first led people to discover the crazy among Rats

Rationalists like to talk about a thought experiment known as Roko’s Basilisk. The theory imagines a future superintelligence that will dedicate itself to torturing anyone who did not help bring it into existence. By this logic, engineers should drop everything and build it now so as not to suffer later.

Keep saving money for retirement and keep having kids, but for god's sake don't stop blogging about how AI is gonna kill us all in 5 years:

To Brennan, the Rationalist writer, the healthy response to fears of an A.I. apocalypse is to embrace “strategic hypocrisy”: Save for retirement, have children if you want them. “You cannot live in the world acting like the world is going to end in five years, even if it is, in fact, going to end in five years,” they said. “You’re just going to go insane.”

Yet Rationalists I spoke with said they didn’t see targeted violence — bombing data centers, say — as a solution to the problem.

Ah, you see, you fail to grasp the shitlib logic that the US bombing other countries doesn't count as illegitimate violence as long as the US has some pretext and maintains some decorum about it.

“A lot of the early Rationalists thought it was important to tolerate weird people, a lot of weird people encountered that tolerance and decided they’d found their new home,” he wrote in a message to me, “and some of those weird people turned out to be genuinely crazy and in a contagious way among the susceptible.”

Re the "A lot of the early Rationalists" bit. Nice way to not take responsibility, act like you were not one of them, and throw them under the bus because "genuinely crazy", like it's some preexisting condition and not something your group made worse, and a nice abuse of the general public's bias against "crazy" people. Some real Rationalist dark art shit here.

There is some dark irony here in that the "we must make sure the AI doesn't turn bad" people can't even stop their own people from turning bad after looking at their own ideas. Wonder if they have already gone "musk isn't a real Rationalist" (imho he isn't but for some reason LWers seem to like him) after he turned Grok basically into a neonazi (not sure if it was reported here but Grok is now doing great replacement shit when asked about Jewish "control of the media").

Love how the most recent post in the AI2027 blog starts with an admonition to please don't do terrorism:

We may only have 2 years left before humanity’s fate is sealed!

Despite the urgency, please do not pursue extreme uncooperative actions. If something seems very bad on common-sense ethical views, don’t do it.

Most of the rest is run of the mill EA type fluff such as here's a list of influential professions and positions you should insinuate yourself in, but failing that you can help immanentize the eschaton by spreading the word and giving us money.

It's kind of telling that it's only been a couple of months since that fan fic was published and there is already so much defensive posturing from the LW/EA community. I swear the people who were sharing it when it dropped and tacitly endorsing it as the vision of the future from certified prophet Daniel K are now like, "oh it's directionally correct, but too aggressive". Note that we are over halfway through 2025 and the earliest prediction, of agents entering the work force, is already fucked. So if you are a 'super forecaster' (guru) you can do some sleight of hand now to come out against the model, knowing the first goal post was already missed and the tower of conditional probabilities that rests on it is already breaking.

Funniest part is even one of the authors themselves seems to be panicking too, as even they can tell they are losing the crowd, and is falling back on this "It's not the most likely future, it's just the most probable." A truly meaningless statement if your goal is to guide policy, since events with arbitrarily low probability density can still be the "most probable" given enough different outcomes.
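To put toy numbers on that last point, here's a minimal sketch. Everything in it is made up for illustration (the scenario count, the weights, the `modal_scenario` helper); none of it comes from the AI-2027 model itself. It just shows that the single "most probable" scenario can still be one you should bet heavily against.

```python
from fractions import Fraction

def modal_scenario(n_scenarios: int, boost: Fraction):
    """Toy forecast: scenario 0 gets `boost` times the weight of every other scenario.

    Returns the index of the most probable scenario and its probability.
    All numbers are hypothetical.
    """
    weights = [boost] + [Fraction(1)] * (n_scenarios - 1)
    total = sum(weights)
    probs = [w / total for w in weights]
    mode_index = max(range(n_scenarios), key=lambda i: probs[i])
    return mode_index, probs[mode_index]

# 1000 possible futures, one of them twice as likely as any other.
index, p = modal_scenario(1000, Fraction(2))
print(index, float(p))  # scenario 0 is the mode, at roughly 0.2%
```

So "most probable" here means about a 1-in-500 shot, which is not much to hang policy on.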

Also, there's literally mass brain uploading in AI-2027. This strikes me as physically impossible in any meaningful way in the sense that the compute to model all molecular interactions in a brain would take a really, really, really big computer. But I understand if your religious beliefs and cultural convictions necessitate big snake 🐍 to upload you, then I will refrain from passing judgement.

https://www.wired.com/story/openworm-worm-simulator-biology-code/

Really interesting piece about how difficult it actually is to simulate "simple" biological structures in silicon.

One more comment, idk if y'all remember that forecast that came out in April(? iirc ?) where the thesis was the "time an AI can operate autonomously is doubling every 4-7 months." AI-2027 authors were like "this is the smoking gun, it shows why our model is correct!!"

They used some really sketchy metric where they asked SWEs to do a task, measured the time it took, and then had the models do the task and said that the model's performance was wherever it succeeded at 50% of the tasks based on the time it took the SWEs (wtf?), and then they drew an exponential curve through it. My gut feeling is that the reason they chose 50% is because other values totally ruin the exponential curve, but I digress.
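For anyone trying to picture the metric, here's a rough sketch of how I read it. It's simplified to buckets of tasks rather than whatever curve fitting they actually do, the data is invented, and `time_horizon` is a hypothetical helper, not anything from their code. It only illustrates that the reported "horizon" depends a lot on which success threshold you pick.

```python
def time_horizon(results, threshold):
    """Longest human-time bucket (in minutes) at which the model's success
    rate is still >= threshold, scanning from the shortest tasks upward."""
    horizon = 0
    for minutes in sorted(results):
        outcomes = results[minutes]
        rate = sum(outcomes) / len(outcomes)
        if rate < threshold:
            break
        horizon = minutes
    return horizon

# Invented data: human completion time in minutes -> model pass (1) / fail (0) per task.
results = {
    1: [1, 1, 1, 1],
    4: [1, 1, 1, 0],
    15: [1, 1, 0, 0],
    60: [1, 0, 0, 0],
}

print(time_horizon(results, 0.5))  # 15
print(time_horizon(results, 0.8))  # 1
```

Same made-up model, same made-up tasks, and the headline number moves from 15 minutes to 1 minute just by asking for 80% instead of 50%.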

Anyways they just did the metrics for Claude 4, the first FrOnTiEr model that came out since they made their chart and... *drum roll* no improvement... in fact it performed worse than O3, which was first announced last December (note that instead of using the date O3 was announced in 2024, they used the date it was released months later, so on their chart it makes 'line go up'. A valid choice I guess, but a choice nonetheless).

This world is a circus tent, and there still ain't enough room for all these fucking clowns.

Please, do not rid me of this troublesome priest despite me repeatedly saying that he was a troublesome priest, and somebody should do something. Unless you think it is ethical to do so.